AI Governance Strategic Visibility: A Checklist for Kansas City’s Regulated Firms

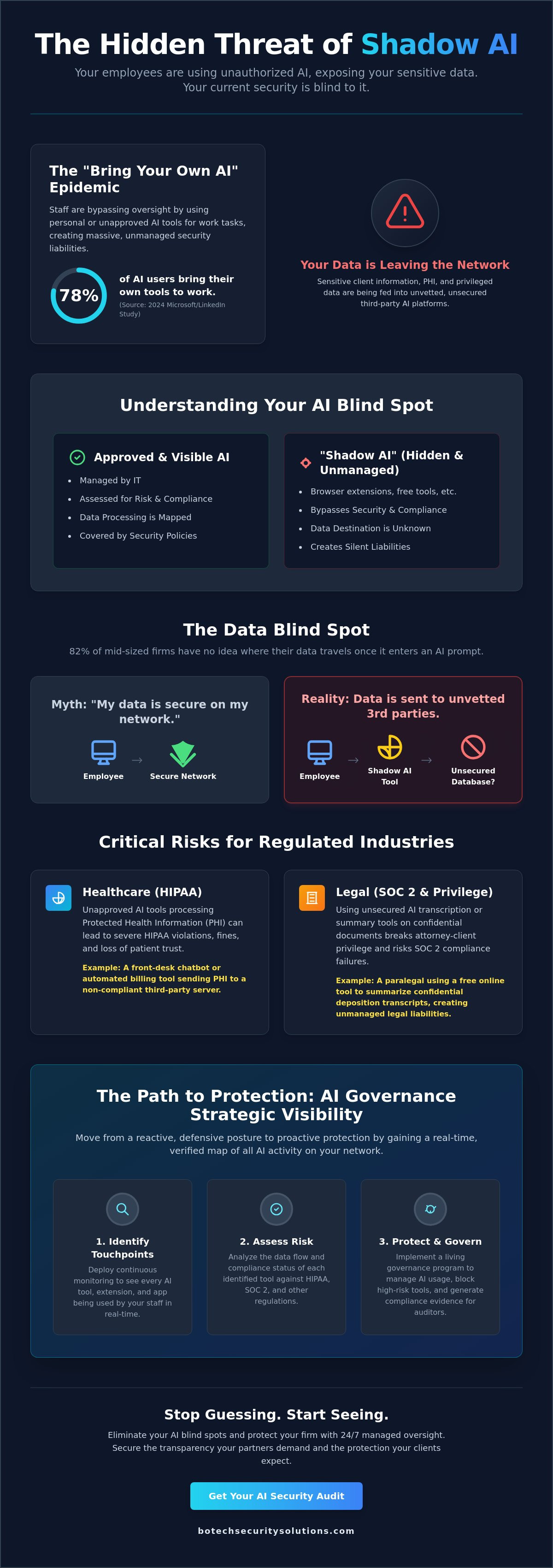

Your employees are likely feeding sensitive client data into unauthorized AI tools, and your current security stack is blind to it. Gaining AI governance strategic visibility is the only way to stop this "Shadow AI" from creating unmanaged liabilities. A 2024 study by Microsoft and LinkedIn revealed that 78% of AI users bring their own tools to work, often bypassing oversight. You cannot protect a Kansas City practice if you don't have a map of where the data is going.

It's exhausting to keep pace with technology while trying to satisfy the strict requirements of HIPAA or SOC 2. You likely feel the pressure of an upcoming audit and the confusion of complex technical jargon. This article provides the framework you need to identify hidden risks before they become breaches. We'll provide a clear path to move from data anxiety to a state of documented, enterprise-grade protection.

We're going to dismantle the myths surrounding AI safety and look at the hard realities of regulated environments. We'll walk through a specific checklist designed to align your AI usage with federal standards. You'll finish this piece with a concrete plan to give your partners the transparency they demand and the security your clients expect.

Key Takeaways

- Stop assuming your team isn’t using AI; unauthorized "Shadow AI" tools are likely already processing sensitive client data within your local network.

- Gain true AI governance strategic visibility by identifying every hidden AI touchpoint to prevent silent compliance failures and data leaks.

- Discover why a static PDF policy is a liability and how to transition to a living governance program that generates the evidence regulators demand.

- Follow a pragmatic, step-by-step checklist to audit your endpoints and protect attorney-client privilege or PHI from unsecured AI transcription tools.

- Learn how to bridge the gap between enterprise-grade security and your small business budget by implementing managed oversight that monitors AI risks 24/7.

What is AI Governance Strategic Visibility for Kansas City Businesses?

Kansas City business owners often assume their IT teams have a handle on every piece of software running in the office. This assumption is a dangerous myth that leaves your sensitive data exposed to unseen risks. True AI governance strategic visibility is not about reading a software manual or trusting a vendor's promise. It is the tactical ability to see every single AI touchpoint across your entire local network in real time.

For a local firm, this visibility means knowing exactly which employee is feeding client data into a browser extension or a third-party summary tool. Most owners are not aware that these "helpful" tools often store your data in unsecured external databases.

Large corporations often hire massive teams to manage enterprise-level AI oversight, but a Kansas City medical clinic has different needs. You do not need a hundred-page policy; you need a pragmatic view of how your front-desk chatbot or automated billing tool interacts with protected health information. We focus on Managed Security that bridges the gap between high-end protection and the reality of a busy local practice.

There is an uncomfortable truth that most vendors avoid: 82% of mid-sized firms in the metro area have no idea where their data travels once it enters an AI prompt. You might think your data is private, but without a verified map of your digital traffic, you are just guessing. This lack of clarity is why visibility is the non-negotiable prerequisite for any serious HIPAA or SOC 2 compliance effort.

The Local Regulatory Landscape in the KC Metro

The legal environment in the Midwest is shifting rapidly. Missouri and Kansas data privacy expectations are evolving toward much stricter enforcement by 2026. If you operate in a regulated sector such as healthcare, you are already bound by the HIPAA Administrative Safeguards at 45 CFR § 164.308, which require a formal risk analysis of every system that touches electronic protected health information. Most firms are not compliant with this section because they cannot produce evidence of where their AI tools are actually processing data.

Effective AI Governance requires more than just a signed document in a drawer. It requires a living system that identifies new AI deployments as they happen. If you cannot see the tool, you cannot assess the risk, and you certainly cannot prove compliance to an auditor or a state regulator. Organizations that cannot afford to get this wrong must move beyond "checked boxes" and toward actual technical verification.

Visibility vs. Control: Why You Need Both

Think of visibility as the map and governance as the rules of the road for your firm. You can have the best rules in the world, but they are useless if you do not know where your staff is driving. Consider a typical Overland Park law firm where a paralegal uses an unapproved AI tool to summarize confidential deposition transcripts. This is "shadow AI," and it creates unmanaged legal liabilities that your standard professional liability insurance likely will not cover.

When you achieve AI governance strategic visibility, you eliminate these blind spots. You gain the power to see a violation before it becomes a headline or a lawsuit. It allows you to move from a defensive, reactive posture to a state of proactive protection. AI strategic visibility is the clear line of sight between AI execution and executive accountability.

The Shadow AI Problem in Kansas City Healthcare and Legal Firms

Your staff is already using AI. Whether it is a paralegal in Lee's Summit summarizing a deposition or a billing clerk in Tulsa cleaning up spreadsheet data, these tools are already inside your four walls. Most firm owners believe they have absolute control over their software stack, but the reality is that "Shadow AI" operates silently in the background of every browser and mobile device. Without AI governance strategic visibility, you aren't just behind the curve; you are actively leaking protected data to third-party servers you don't own and cannot audit.

The risk is not theoretical. When an employee pastes a confidential contract into a free LLM to "make it sound more professional," they are effectively publishing that data to a public cloud. For law firms, this is a direct violation of attorney-client privilege. For healthcare practices, it is a fast track to a HIPAA violation. Organizations that cannot afford to get this wrong must realize that if they haven't performed a forensic audit of their team's browser extensions and mobile apps, they are likely out of compliance right now.

Real-World Scenario: The Compromised Patient Record

A mid-sized clinic near Bentonville recently discovered a staffer was using a free AI transcription tool to process patient intake notes. They thought they were being efficient. In reality, that tool was sending Protected Health Information (PHI) to an unencrypted server located outside the United States. Because the tool had never been assessed, the clinic fell short of the HIPAA Security Rule at 45 CFR § 164.308(a)(1)(ii)(A), which requires an accurate and thorough risk analysis covering electronic PHI. Under current OCR enforcement standards, a lack of AI governance strategic visibility can lead to "willful neglect" penalties, which can reach $71,162 per violation or more under the 2024 adjusted penalty tiers.

Why Basic IT Support Fails at AI Governance

Most managed IT support providers focus on "keeping the lights on" rather than securing the invisible data flow. They check your firewall and update your Windows patches, but they rarely monitor the specific API calls made by a rogue Chrome extension. This is the difference between passive monitoring and Vigilant Guardianship. We take a veteran-owned approach to security that treats every data exit point as a potential breach site.

To build a framework that actually holds up under scrutiny, many firms look toward the AI Guide for Government for a structured approach to risk management. It provides a baseline for what "reasonable" security looks like in a regulated environment. If you want to find out where your firm actually stands, you need more than a basic IT checklist. You need a partner that understands the high-stakes reality of regulated data and the binary nature of security.

Compliance Program vs. Document: Achieving True Strategic Visibility

Most Kansas City firm owners believe a signed PDF sitting in a SharePoint folder constitutes a governance strategy. It doesn't. A static document is a liability because it promises protections you aren't actually delivering to your clients or regulators. Real AI governance strategic visibility requires a living system that generates technical evidence every hour of every day. Most firms are not prepared for the scrutiny that follows an AI-related data leak.

A policy is just a statement of intent; a program is a mechanism for enforcement. If your policy says you don't allow sensitive patient data in public AI tools, but you have no way to see what your staff is typing into a browser, your policy is worthless. You need a system that bridges the gap between what you say you do and what your logs prove you are actually doing. This is the binary reality of modern compliance where you are either protected or you are vulnerable.

The Audit-Ready Evidence Trail

A financial firm in Oklahoma City cannot simply tell an auditor they have an AI oversight process. By 2026, SOC 2 auditors will transition from asking for annual screenshots to demanding proof of "continuous monitoring" for all AI models. You need specific logs that track prompt history, data egress alerts, and model versioning records to survive a professional review. This is the fundamental difference between "we have a policy" and "we have the logs to prove it."
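To make the idea of an evidence trail concrete, here is a minimal sketch of what one log record might look like. The field names, the `make_audit_record` helper, and the choice to store a hash of the prompt rather than the prompt itself are all illustrative assumptions, not a format any auditor prescribes.

```python
import hashlib
import json
from datetime import datetime, timezone

def make_audit_record(user, tool, model_version, prompt, egress_alert):
    """Build one audit record for a single AI prompt (hypothetical schema)."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
        "model_version": model_version,
        # Assumption: keep a fingerprint of the prompt, not the raw text,
        # so the log itself does not become a second copy of sensitive data.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "egress_alert": egress_alert,
    }

def to_json_line(record):
    """Serialize a record as one JSON line, suitable for an append-only log."""
    return json.dumps(record, sort_keys=True)

record = make_audit_record(
    user="jdoe",
    tool="contract-summarizer",   # hypothetical tool name
    model_version="v1.2",         # hypothetical version label
    prompt="Summarize the attached engagement letter.",
    egress_alert=False,
)
print(to_json_line(record))
```

One record per prompt, written as it happens, is the kind of artifact that separates a monitored program from a policy on paper.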

Organizations that cannot afford to get this wrong must automate their data collection. Relying on manual spreadsheets for compliance is a recipe for failure during a high-stakes audit. You can find out how to implement these automated systems through BoTech Compliance Services to ensure your evidence trail is always current. We build the infrastructure so you don't have to hunt for logs when a regulator knocks on your door.

Strategic Visibility Across the AI Lifecycle

True visibility must extend from the moment a new tool is procured in Rogers to the day it is decommissioned in Olathe. If you lose track of where a specific model is used within your workflow, you lose control of your proprietary data. True visibility requires seeing the data, the user, and the AI output simultaneously. This level of oversight ensures that your firm remains aligned with the NIST AI Risk Management Framework throughout the entire technology lifecycle.

Choosing "one partner" to handle both your security and your compliance simplifies this massive oversight burden. You shouldn't have to manage three different vendors just to see if your AI is behaving correctly. We provide enterprise-grade protection at a small business price point, giving you the same "Vigilant Guardian" oversight used by major corporations. According to a 2023 IBM report, the average cost of a data breach reached $4.45 million, making this level of visibility a financial necessity rather than a luxury.

The AI Governance Strategic Visibility Checklist for KC Owners

Most Kansas City business owners assume they know exactly what software is running on their office network. They are almost always wrong. Achieving AI governance strategic visibility starts with a sobering reality check regarding "Shadow AI" and the unauthorized tools your staff uses to make their lives easier. If you are not actively monitoring your endpoints, your proprietary data is likely already feeding a public model training set somewhere.

The first step requires a comprehensive discovery audit across every workstation and mobile device in your Kansas City office. You must identify every instance of unauthorized AI software, from browser extensions to standalone desktop apps. Once identified, you have to map data sensitivity tiers to see what AI is touching Protected Health Information (PHI) or Personally Identifiable Information (PII). This is not a "one and done" task; it is the foundation of a managed security posture.

Discovery and Data Mapping

You should utilize professional vulnerability assessments to locate every hidden AI integration within your environment. It is vital to categorize these tools into three buckets: Generative AI for content creation, Analytical AI for data processing, and Embedded AI found in existing software updates. For law firms in Tulsa, this mapping must extend to client discovery data to ensure that automated review tools do not violate attorney-client privilege or data residency requirements. A 2023 Stanford University study found that large language models can hallucinate or provide incorrect legal citations up to 50 percent of the time, making this mapping critical for professional liability.
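The mapping described above can be sketched as a simple inventory. The three categories come from the text; the four sensitivity tiers, the `AITool` fields, the sample tools, and the `needs_review` rule are assumptions chosen for illustration, so adjust them to your own data classification.

```python
from dataclasses import dataclass
from enum import Enum

class Category(Enum):
    GENERATIVE = "generative"   # content creation
    ANALYTICAL = "analytical"   # data processing
    EMBEDDED = "embedded"       # AI shipped inside existing software

class DataTier(Enum):
    # Assumed tiers, ordered from least to most sensitive.
    PUBLIC = 0
    INTERNAL = 1
    PII = 2
    PHI = 3

@dataclass
class AITool:
    name: str
    category: Category
    highest_tier: DataTier   # most sensitive data the tool has been seen touching
    approved: bool
    baa_signed: bool = False

def needs_review(tool: AITool) -> bool:
    """Flag unapproved tools touching regulated data, or PHI tools without a BAA."""
    regulated = tool.highest_tier in (DataTier.PII, DataTier.PHI)
    unapproved_regulated = regulated and not tool.approved
    phi_without_baa = tool.highest_tier is DataTier.PHI and not tool.baa_signed
    return unapproved_regulated or phi_without_baa

# Hypothetical inventory produced by a discovery audit.
inventory = [
    AITool("browser summarizer extension", Category.GENERATIVE, DataTier.PHI, approved=False),
    AITool("billing analytics", Category.ANALYTICAL, DataTier.PII, approved=True),
    AITool("office suite assistant", Category.EMBEDDED, DataTier.INTERNAL, approved=True),
    AITool("transcription service", Category.GENERATIVE, DataTier.PHI, approved=True),
]

flagged = [tool.name for tool in inventory if needs_review(tool)]
print(flagged)
```

Even a small table like this gives you something to hand an auditor: each tool, what it is, what data it touches, and whether anyone signed off.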

Every AI vendor that handles PHI on your behalf requires a signed Business Associate Agreement (BAA). You cannot rely on a standard "Terms of Service" click-through agreement to satisfy the business associate contract requirement in 45 CFR § 164.308(b), and the tool still belongs in the risk analysis required by § 164.308(a)(1)(ii)(A). Your endpoint monitoring must be configured to flag AI-related data exfiltration the moment a user attempts to paste sensitive data into an unapproved prompt. This is the difference between a static compliance document and an active compliance program that generates evidence of protection.
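As a rough illustration of what "flagging a paste" means in practice, the sketch below checks text for a few identifier patterns before it reaches an unapproved destination. The patterns, the `should_block` helper, and the `.example` hostnames are hypothetical; commercial endpoint tools use much broader detection than three regular expressions.

```python
import re

# Illustrative patterns only; a real policy needs far wider coverage.
PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "mrn": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),
    "dob": re.compile(r"\bDOB[:\s]*\d{1,2}/\d{1,2}/\d{4}\b", re.IGNORECASE),
}

def find_identifiers(text: str) -> list[str]:
    """Return the sorted names of every pattern that appears in the text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))

def should_block(text: str, destination: str, approved: set[str]) -> bool:
    """Block when identifiers are headed to a destination that is not approved."""
    return destination not in approved and bool(find_identifiers(text))

approved_destinations = {"vetted-ai.example"}  # hypothetical approved tool
prompt = "Clean up this note: Patient MRN: 12345678, SSN 123-45-6789"

print(find_identifiers(prompt))
print(should_block(prompt, "free-llm.example", approved_destinations))
```

The point of the sketch is the decision rule: the same text is allowed into a vetted tool and stopped on its way to an unvetted one.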

Enforcement and Human Oversight

Your Kansas City office manager must implement a "Human-in-the-Loop" protocol for every AI-generated output. Staff training should focus on the uncomfortable truth that AI is a "black box" that prioritizes plausibility over accuracy. In a real-world scenario, an Overland Park medical clinic recently discovered a staffer using a free AI tool to summarize patient intake notes. Because no BAA was in place, this constituted a reportable breach under the HITECH Act. Organizations that cannot afford to get this wrong must move beyond "Acceptable Use" memos and implement technical blocks.

The most effective actionable step is blocking high-risk AI domains at the firewall level immediately. This forces employees to use vetted, secure alternatives that your IT partner has already cleared for compliance. You are either in control of your data flow or you are a headline waiting to happen. There is no middle ground when it comes to regulatory scrutiny in the age of automation.
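Firewall rule syntax varies by vendor, so the sketch below uses DNS sinkhole entries in hosts-file format as a stand-in for a domain block. The domain names are placeholders under the reserved `.example` suffix and the `build_sinkhole_entries` helper is hypothetical; your IT partner would translate the same list into your firewall's own format.

```python
def build_sinkhole_entries(high_risk, approved):
    """Return hosts-format lines that sinkhole every high-risk domain not on the approved list."""
    blocked = {domain.lower() for domain in high_risk}
    allowed = {domain.lower() for domain in approved}
    return [f"0.0.0.0 {domain}" for domain in sorted(blocked - allowed)]

# Placeholder lists; replace with the domains found in your discovery audit.
high_risk_domains = ["free-llm.example", "Transcribe-AI.example", "vetted-ai.example"]
approved_domains = ["vetted-ai.example"]

for line in build_sinkhole_entries(high_risk_domains, approved_domains):
    print(line)
```

Keeping the approved list separate from the block list makes the exception process visible: a tool moves from blocked to allowed only when someone adds it on purpose.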

Stop guessing about your network's hidden risks and find out where you actually stand with a professional assessment.

Securing Your Firm’s Future with Managed AI Governance

Regulated firms in Kansas City often operate under a dangerous assumption. They believe their size makes them invisible to sophisticated threats or regulatory scrutiny. Real AI governance strategic visibility proves otherwise. Most local networks are leaking sensitive data through unmonitored AI tools right now.

BoTech acts as the critical bridge for Lee’s Summit businesses that need enterprise protection without the bloated corporate ego. We serve organizations that cannot afford to get this wrong. A single HIPAA violation involving AI data can cost a practice $71,162 per violation according to 2024 OCR penalty adjustments. You need 24/7 Managed Detection and Response (MDR) to catch these leaks before they become headlines.

Visibility is not a one-time project. It's a continuous state of vigilance that requires constant adjustment. Your AI oversight must be as persistent as the threats you face. We provide the technical backbone that allows you to focus on your clients while we handle the digital gatekeeping.

The BoTech Advantage: Veteran-Owned Discipline

Military-grade precision isn't just a marketing slogan for us. It's how we approach AI oversight for our neighbors in the KC metro area. We utilize a flat rate model that brings high-end security to the local level. You can access our Managed Security Services for constant monitoring that never sleeps. This eliminates the enterprise ego while maintaining the safety standards your firm requires to stay solvent.

Our approach removes the guesswork from your security budget. You get a partner that takes ownership of the outcome rather than just billing for hours. We focus on generating the ongoing evidence required by regulators like the SEC or HHS. A document on a shelf won't save you during an audit, but a managed program will.

Next Steps: Find Out Where You Actually Stand

You should start by downloading our local AI policy template to set baseline rules for your staff. This gives you a starting point for internal accountability. Be prepared for some uncomfortable truths during this process. Your network is likely noisier than you think it is.

Most owners discover their employees are already using high-risk AI tools without any encryption or oversight. This shadow IT creates a massive liability for your professional license. Take the final step and find out where you actually stand with a free AI risk assessment today. We'll show you exactly what's crossing your perimeter so you can stop guessing about your security.

Take Control of Your Firm's AI Future

Kansas City firms often mistake a written policy for actual protection. A static document doesn't stop an employee from feeding sensitive patient data or legal briefs into an unsecured LLM. You need a living program that tracks every tool in use across your network. Achieving AI governance strategic visibility means moving past dangerous assumptions and seeing your data flows in real time.

BoTech is veteran-owned and operated. We specialize in the rigid requirements of HIPAA, SOC 2, and PCI DSS for organizations that cannot afford to get this wrong. Our team provides 24/7 Managed Detection and Response to ensure your security posture remains active rather than theoretical. Most firms believe they're covered. Most are not.

The first step is identifying the gaps you don't know exist. You can't manage what you can't see. Take a moment to find out where you actually stand with a free AI risk assessment. It's time to build a foundation that holds up under regulatory scrutiny and protects your professional reputation.

Frequently Asked Questions

What is the difference between AI governance and basic cybersecurity?

Cybersecurity focuses on locking the doors of your network, but AI governance manages what the people inside are doing with your sensitive data. Basic security tools like firewalls don't monitor the specific prompts your team sends to a cloud-based model. You need AI governance strategic visibility to see where your data goes once it leaves your protected network. Dedicated specialists like CyberOne provide Managed Extended Detection and Response (MXDR) to ensure your Microsoft Security stack is equipped for these modern challenges. Most firms assume their current stack covers this. It doesn't.

Does HIPAA explicitly regulate the use of AI in medical practices?

HIPAA does not mention Artificial Intelligence by name, but the Security Rule under 45 CFR § 164.308 requires a risk analysis for all electronic protected health information. If you feed patient data into an AI tool, that technology becomes part of your regulated environment. You're responsible for that data regardless of the software used. Failing to document this risk is a direct violation of the Security Rule.

How can I tell if my employees are using ChatGPT without permission?

You can identify unauthorized use by monitoring DNS logs for traffic to openai.com and chatgpt.com, or by using endpoint detection software to flag specific browser extensions. A 2023 Fishbowl survey found that 43 percent of professionals had used AI tools such as ChatGPT for work, and most of them had not told their supervisors. If you don't have a technical block or a clear policy, your employees are likely using it right now. This creates shadow IT that bypasses your security controls.
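Here is a minimal sketch of the DNS-log check. It assumes a simplified log format of `timestamp client_ip domain` on each line and a short illustrative watchlist; real resolver logs look different from vendor to vendor, so treat the parsing as a placeholder.

```python
from collections import Counter

# Illustrative watchlist; extend it with the AI services relevant to your firm.
AI_DOMAINS = {"openai.com", "chatgpt.com"}

def is_ai_domain(domain: str) -> bool:
    """True if the domain is on the watchlist or is a subdomain of a watched domain."""
    domain = domain.lower().rstrip(".")
    return any(domain == d or domain.endswith("." + d) for d in AI_DOMAINS)

def count_ai_queries(log_lines):
    """Count AI-related DNS queries per client IP.

    Assumes each line looks like '<timestamp> <client_ip> <domain>'.
    Lines in any other shape are skipped.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue
        _, client_ip, domain = parts
        if is_ai_domain(domain):
            hits[client_ip] += 1
    return hits

# Made-up sample entries for demonstration.
sample_log = [
    "2024-05-01T09:00:01 10.0.0.12 api.openai.com",
    "2024-05-01T09:00:05 10.0.0.12 chatgpt.com",
    "2024-05-01T09:01:10 10.0.0.30 example.org",
    "2024-05-01T09:02:44 10.0.0.45 notopenai.com",
]

print(count_ai_queries(sample_log))
```

Matching on the full domain or a dot-prefixed suffix avoids false positives from lookalike names such as the last sample entry. DNS logs only show that a workstation reached the service, not what was typed, which is why endpoint monitoring remains the second half of the answer.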

Is a Business Associate Agreement (BAA) enough to make an AI tool compliant?

A BAA is a legal requirement under HIPAA, but it's not a complete solution for AI compliance. The agreement only establishes that the vendor promises to protect data; it doesn't ensure your staff uses the tool correctly. You still need internal controls to prevent the input of prohibited identifiers. Compliance is an ongoing process of generating evidence, not just signing a single document and walking away.

Can AI governance help my Kansas City law firm pass a SOC 2 audit?

AI governance is essential for a SOC 2 audit because it addresses the Common Criteria related to risk management and third-party oversight. Auditors now look for how organizations manage subprocessors, including the AI models integrated into your legal research tools. Implementing AI governance strategic visibility allows you to prove you have oversight of these automated workflows. Without this evidence, your firm risks a qualified opinion on its report.

What are the biggest AI risks for small businesses in the KC metro area?

The primary risk for Kansas City firms is the accidental leakage of proprietary intellectual property into public training models. Local clinics and law offices are also prime targets for social engineering attacks enhanced by AI-generated deepfakes. The FBI reported that business email compromise cost organizations $2.9 billion in 2023. Small businesses often lack the dedicated oversight to catch these sophisticated scams before the money leaves the bank.

How much does it cost to implement an AI governance program?

The cost of a governance program is a fraction of the $4.45 million IBM cites as the average price of a data breach. You're paying for the prevention of a disaster that often kills small firms. Organizations that cannot afford to get this wrong view this as a necessary cost of doing business in a regulated industry. It's the difference between a predictable monthly management fee and an unpredictable, business-ending catastrophe.